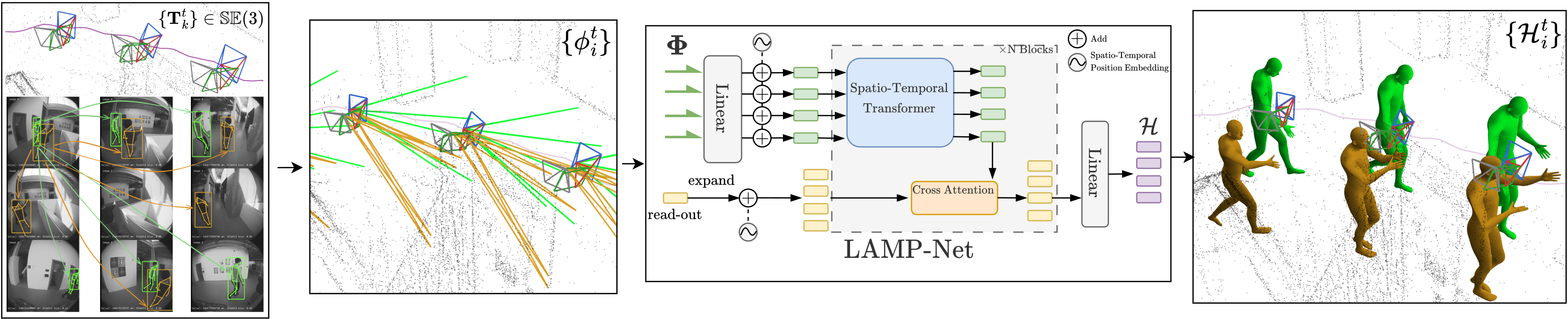

Method Overview

LAMP tracks multiple people over time from a multi-camera headset by lifting 2D observations into world-space rays early in the pipeline. Starting from individual images, the method detects a 2D bounding box and 2D keypoints for each person, associates these detections across cameras and time, and then back-projects the 2D keypoints into posed 3D rays using the known camera calibration and the 6-DoF device poses. The resulting spatio-temporal ray cloud is processed by LAMP-Net, a spatio-temporal transformer that outputs SMPL body motion parameters for each tracked person at each timestamp.
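The back-projection step can be illustrated with a minimal sketch. It assumes an ideal pinhole camera with intrinsic matrix `K` and a camera-to-world pose given as a rotation `R_world_cam` and translation `t_world_cam`; the function name, argument names, and the pinhole model itself are illustrative assumptions, not taken from the method (headset cameras often use wide-angle or fisheye models, which would need a different unprojection).

```python
import numpy as np

def backproject_to_world_rays(keypoints_px, K, R_world_cam, t_world_cam):
    """Lift 2D pixel keypoints to world-space rays (hypothetical helper).

    keypoints_px : (N, 2) pixel coordinates (u, v)
    K            : (3, 3) pinhole intrinsics (assumed model)
    R_world_cam  : (3, 3) rotation mapping camera axes to world axes
    t_world_cam  : (3,)   camera center expressed in world coordinates
    Returns ray origins (N, 3) and unit ray directions (N, 3) in world space.
    """
    keypoints_px = np.asarray(keypoints_px, dtype=float)
    K = np.asarray(K, dtype=float)
    R_world_cam = np.asarray(R_world_cam, dtype=float)
    t_world_cam = np.asarray(t_world_cam, dtype=float)

    # Homogeneous pixel coordinates (u, v, 1).
    ones = np.ones((keypoints_px.shape[0], 1))
    pixels_h = np.hstack([keypoints_px, ones])

    # Camera-frame directions: solve K d = p for each pixel p.
    dirs_cam = np.linalg.solve(K, pixels_h.T).T

    # Rotate into the world frame and normalize to unit length.
    dirs_world = dirs_cam @ R_world_cam.T
    dirs_world /= np.linalg.norm(dirs_world, axis=1, keepdims=True)

    # Every ray from one camera starts at that camera's center.
    origins = np.tile(t_world_cam, (dirs_world.shape[0], 1))
    return origins, dirs_world
```

Under these assumptions, each keypoint becomes an origin and a unit direction in a shared world frame, so rays from different cameras and timestamps can be pooled into a single ray cloud regardless of how the headset moved.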